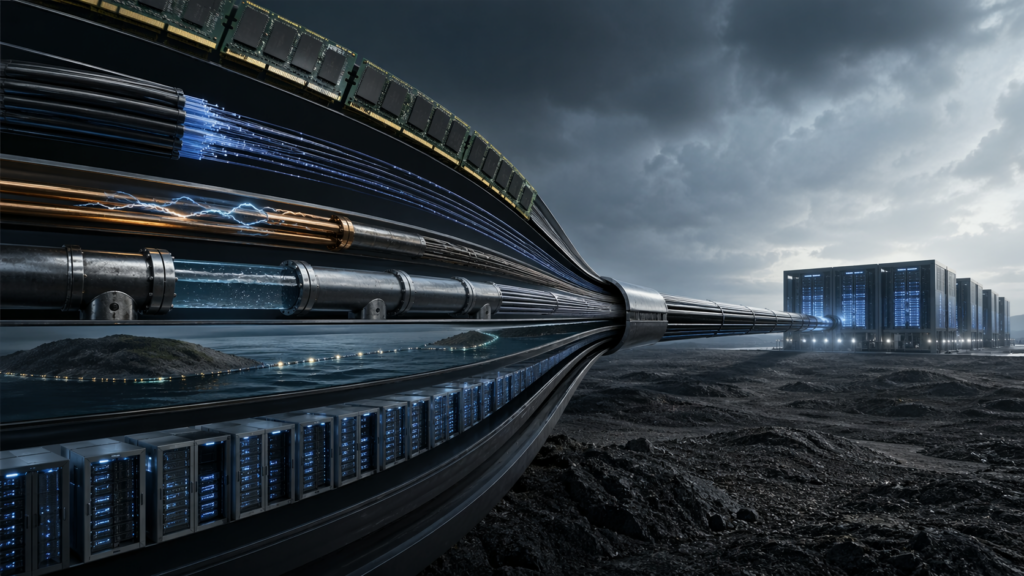

The most important AI risks in 2026 are not at the model layer. They sit lower down, in memory, networking, power, cloud access, and the physical systems that keep AI running.

That is where the real leverage is. These bottlenecks decide who can scale, who gets delayed, and who ends up dependent on someone else’s infrastructure.

Why this matters

Most AI coverage still revolves around models: who released the latest one, who tops a benchmark, who has the best chatbot. Those things matter, but they can distract from the harder and more durable story.

AI runs on a stack of scarce inputs. It needs advanced memory, high-speed networking, reliable electricity, cooling, secure data centers, cloud capacity, and trusted identity systems. If any one of those layers breaks down, progress slows. If a few of them tighten at once, whoever controls them gains leverage over everyone who depends on them.

That is why the central question for 2026 is changing. It is no longer just who can build the best model. It is who can get the chips, move the data, power the systems, secure the sites, and keep access open under stress.

The main choke points

High-bandwidth memory

High-bandwidth memory, or HBM, is one of the clearest bottlenecks in the AI stack. Advanced AI chips depend on it, and supply is tight.

That matters because even if GPUs are available, systems cannot scale properly without the memory to feed them. Large firms can lock in supply with long contracts and capital. Smaller labs, public institutions, and second-tier firms usually cannot. In practice, that means memory shortages can decide who gets to grow and who gets stuck waiting.

Networking and interconnects

It is no longer enough to own a large number of chips. Those chips have to talk to one another at very high speed and very low latency.

That is why networking and interconnects are becoming so important. Frontier AI systems depend on optical links, fast switches, and specialized data movement. If those systems lag, the whole cluster underperforms. This is one reason raw GPU counts can be misleading: two firms may have similar hardware, but the one with better interconnects can train larger systems faster and more efficiently.
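A rough way to see why raw GPU counts mislead is to model how much of each training step is spent waiting on the network. The sketch below is a minimal illustration, not a measurement: it assumes communication is not overlapped with compute, and the function name `effective_throughput` and every number in it are hypothetical.

```python
def effective_throughput(num_gpus, per_gpu_tflops, compute_s, comm_s):
    """Aggregate useful TFLOP/s for a cluster.

    Assumes each training step spends compute_s seconds computing and
    comm_s seconds waiting on communication that is not overlapped
    with compute (a simplifying assumption).
    """
    efficiency = compute_s / (compute_s + comm_s)
    return num_gpus * per_gpu_tflops * efficiency


# Hypothetical figures: two firms with identical chips and chip counts.
# Firm A has faster interconnects, so less of each step is spent waiting.
firm_a = effective_throughput(10_000, 100.0, compute_s=0.8, comm_s=0.2)
firm_b = effective_throughput(10_000, 100.0, compute_s=0.8, comm_s=0.8)

print(f"Firm A useful throughput: {firm_a:,.0f} TFLOP/s")
print(f"Firm B useful throughput: {firm_b:,.0f} TFLOP/s")
print(f"Firm A advantage: {firm_a / firm_b:.2f}x")
```

Under these assumed numbers, the two firms own the same hardware on paper, yet the one with slower interconnects gets far less useful work out of it. Real clusters overlap communication with compute to varying degrees, so the gap in practice depends on the workload and the network design.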

Power and cooling

Power may be the most underrated limit on AI growth. AI data centers need enormous amounts of electricity, and they generate huge amounts of heat.

This creates a hard physical ceiling. If the grid cannot deliver enough power, if local permits stall, or if cooling systems cannot keep up, expansion slows or stops. That gives an edge to places with abundant electricity, faster permitting, and the ability to build cooling and backup power quickly. In 2026, energy geography may matter as much as software talent.

Data-center siting and physical security

Data centers are often treated like commercial real estate. They are better understood as strategic infrastructure.

Where they are built matters. So does whether they can be defended, insured, powered, cooled, and connected during a crisis. A country may have ambitious AI plans, but if its data centers sit in exposed or unstable locations, that capacity is fragile. In that sense, siting is not a side issue. It is part of AI sovereignty itself.

Chip equipment and export controls

Export controls are now one of the main ways states shape the AI race. The point is not always to stop development outright. Often it is to slow it, raise its cost, or make scaling harder over time.

That makes chipmaking tools and manufacturing equipment just as important as the chips themselves. Restrictions on lithography equipment, packaging, or key inputs can quietly degrade a country’s AI trajectory. The effect may not show up in one dramatic moment. More often it appears as delay, higher cost, and forced substitution.

Cloud access and compute allocation

Cloud access is easy to overlook because it looks like a business service. In reality, it is becoming a geopolitical asset.

A small number of firms control much of the compute needed to train and deploy advanced AI systems. That means access is concentrated, and concentration creates dependence. If a government, university, or company relies on foreign hyperscalers, it also relies on foreign legal systems, foreign political decisions, and foreign capacity priorities. That is not just a market condition. It is a strategic exposure.

Identity and trust infrastructure

Another underreported choke point is trust. AI is making it easier to imitate voices, faces, documents, and identities at scale.

That puts pressure on the systems that banks, governments, and public services use to verify who is real. Once the verification layer weakens, the damage goes beyond fraud. Institutions become slower, more suspicious, and more expensive to run. In the worst case, societies end up defending themselves against synthetic people as much as against traditional cyberattacks.

Undersea and terrestrial connectivity

AI depends on physical data routes, including undersea cables and terrestrial backbones. These are easy to ignore because they sit outside the glamour of AI itself.

But they are part of the system’s nervous system. If routes are cut, rerouted, degraded, or caught in geopolitical conflict, cloud access and AI services can suffer quickly. For countries at the edge of the network, or for places with limited route diversity, this is a serious vulnerability.

What makes these risks easy to miss

These choke points are underreported for a simple reason: they are less visible than model launches. A new chatbot is easy to explain. A shortage in HBM or a delay in substation upgrades is not.

But the less visible story is often the more important one. Scarce inputs and infrastructure constraints are what make strategic advantage durable. They determine who can keep scaling, who can be slowed, and who becomes dependent on someone else’s system.

What to watch in 2026

Several warning signs are worth tracking closely:

- Longer lead times for HBM and other advanced memory.

- More investment and consolidation in photonics and high-speed interconnects.

- Grid bottlenecks, water disputes, and local fights over AI data-center expansion.

- New export-control rounds aimed at chips, equipment, or cloud-mediated access.

- More demands for sovereign cloud and local control over compute.

- Rising fraud losses tied to deepfakes, synthetic identities, and impersonation.

- Cable disruptions or geopolitical tension near major data routes.

Bottom line

The most important AI choke points in 2026 are not the ones that get the most headlines. They are the hidden constraints that sit underneath the model layer and determine whether AI systems can actually be built, scaled, and sustained.

That is where the real contest is moving. Advantage in AI will depend less on who has the flashiest model and more on who controls the stack beneath it.
