Introduction

The question is no longer whether artificial intelligence will change the workplace. It already has. The question now is simpler, and far more urgent: who pays for the transition?

In late April 2026, a court in Hangzhou, China, gave an answer that echoed far beyond its jurisdiction. A quality assurance supervisor, surnamed Zhou, had his core duties absorbed by large language models. When his employer offered him a reassignment with a 40 percent pay cut and fired him after he refused, the court ruled the dismissal unlawful. The reasoning was straightforward: adopting new technology is a voluntary business decision. It is not a legal loophole for shedding workers.

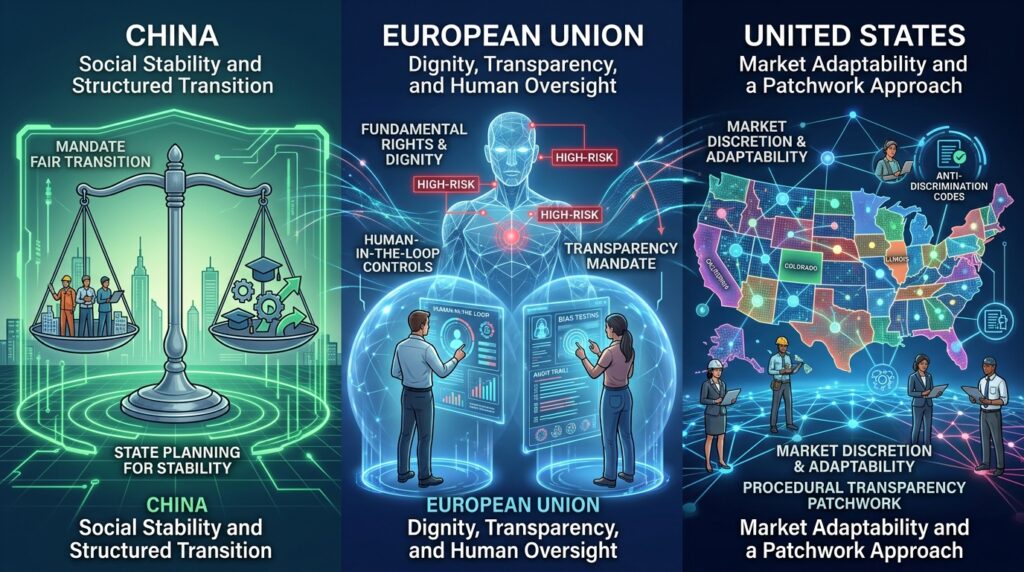

This ruling did not happen in a vacuum. It sits alongside a growing body of case law, regulatory guidance, and legislative action across the globe. China is moving to protect social stability by forcing employers to internalize transition costs. The European Union is treating workplace AI as a high-risk domain, demanding transparency and human oversight. The United States, lacking a unified federal law, is relying on a patchwork of state mandates focused on notice and anti-discrimination, while leaving displacement decisions largely to the market.

These are not minor regulatory adjustments. They are foundational choices about how societies will handle the collision between technological capability and human livelihood. And they are quietly becoming proxies for a wider contest: who sets the global standards for algorithmic governance? The rules being drawn now will shape labor markets, corporate liability, and geopolitical competitiveness for the next decade.

The Hangzhou Precedent

The Hangzhou case matters because it turns a familiar corporate argument on its head. Companies have long claimed that adopting automation or AI constitutes a “major change in objective circumstances,” a threshold in Chinese labor law that can justify ending or restructuring a contract when its original terms can no longer be performed. The Hangzhou Intermediate People’s Court rejected that framing outright.

The court established three clear principles:

- AI adoption is a business choice, not a force majeure event. Deploying new tools does not legally excuse an employer from standard contract obligations.

- Transition costs belong to the employer. Firms cannot offload the financial and operational risks of technological iteration onto workers.

- Reassignment must be reasonable. A steep pay reduction does not qualify as a good-faith alternative position.

This was not an isolated decision. In December 2025, an arbitration panel under the Beijing Municipal Bureau of Human Resources and Social Security reached a similar conclusion in a case involving a map data collector displaced by automated mapping systems. The panel ruled that an employer’s desire to stay competitive through AI does not override statutory labor protections.

Taken together, these rulings signal a clear posture in China: technological progress will not be allowed to bypass labor law. The state expects companies to absorb the human costs of automation, viewing sudden white-collar displacement as a threat to social harmony rather than a natural market adjustment. This approach reflects Beijing’s broader governance model, where economic policy is explicitly tied to social stability objectives (Zhang, 2025).

Three Philosophies, One Problem

The Hangzhou ruling is a snapshot of a much larger global divergence. When it comes to AI in the workplace, China, the European Union, and the United States are operating from three distinct playbooks—and each reflects deeper institutional values.

China prioritizes stability. The regulatory lens here is pragmatic. Mass layoffs in tech, logistics, or professional services are treated as systemic risks. The law is being applied to ensure that productivity gains do not come at the expense of worker security. Employers are expected to manage AI transitions as structured workforce events, not unilateral cost-cutting measures. This aligns with China’s broader “common prosperity” framework, which emphasizes inclusive growth and limits on corporate discretion in labor matters (State Council of the People’s Republic of China, 2024).

The European Union prioritizes dignity and transparency. Under the EU AI Act, systems used for hiring, task allocation, performance evaluation, and termination are classified as “high-risk.” That classification triggers mandatory requirements: human-in-the-loop oversight, bias testing, technical documentation, and clear notice to affected workers. The underlying principle is simple. Decisions that shape a person’s career should not be left to opaque algorithms. Europe’s approach treats algorithmic accountability as an extension of fundamental rights—a view rooted in its post-war constitutional tradition (European Commission, 2024).

The United States prioritizes market adaptability. Without a comprehensive federal AI employment law, the U.S. relies on existing anti-discrimination and privacy statutes, layered with emerging state-level rules. The focus is on preventing biased outcomes and ensuring procedural transparency, but employers retain broad discretion to restructure, automate, or displace. The assumption is that market forces will eventually balance efficiency gains with new job creation. This reflects America’s long-standing preference for regulatory minimalism in labor markets, paired with targeted interventions where discrimination or consumer harm is evident (U.S. White House, 2023).

Each approach has trade-offs. China’s model protects workers but may slow innovation among smaller firms. Europe’s safeguards uphold rights but create compliance complexity for multinational employers. America’s flexibility encourages adoption but leaves workers vulnerable to abrupt displacement. No single model is universally optimal—but each reveals what its society values most.

The American Patchwork

In the absence of federal legislation, American workers and employers are navigating a state-by-state landscape. Three jurisdictions have moved fastest, and their laws reveal the direction of U.S. policy.

California’s Fair Employment and Housing Act now requires employers to retain records of Automated Decision Systems—including input data, scoring criteria, and bias testing—for at least four years. Crucially, companies remain legally liable if a third-party AI vendor produces discriminatory outcomes. You cannot outsource compliance.

Illinois amended its Human Rights Act to mandate clear notice whenever algorithmic tools influence hiring, promotion, or discharge. It also explicitly bans proxy discrimination, closing loopholes where seemingly neutral data (like ZIP codes or school affiliations) can act as stand-ins for protected characteristics.
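One way to see how a seemingly neutral field can stand in for a protected characteristic is to measure how well it predicts that characteristic. The sketch below is illustrative only: the records, the field names (`zip_code`, `group`), and the function `proxy_lift` are hypothetical, and the measure is a simple heuristic, not the legal test applied under the Illinois Human Rights Act.

```python
from collections import Counter, defaultdict


def proxy_lift(records, proxy_field, protected_field):
    """Return (baseline, with_proxy) accuracy for guessing the protected field.

    baseline: accuracy of always guessing the most common protected value.
    with_proxy: accuracy of guessing the most common protected value
    within each proxy value. A large gap suggests the proxy leaks
    information about the protected characteristic.
    """
    if not records:
        raise ValueError("records must not be empty")

    overall = Counter(r[protected_field] for r in records)
    baseline = overall.most_common(1)[0][1] / len(records)

    # Count protected values separately for each value of the proxy field.
    by_proxy = defaultdict(Counter)
    for r in records:
        by_proxy[r[proxy_field]][r[protected_field]] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_proxy.values())
    return baseline, correct / len(records)


# Hypothetical applicant data, invented for illustration (placeholder ZIP codes).
applicants = [
    {"zip_code": "00001", "group": "A"},
    {"zip_code": "00001", "group": "A"},
    {"zip_code": "00001", "group": "B"},
    {"zip_code": "00002", "group": "B"},
    {"zip_code": "00002", "group": "B"},
    {"zip_code": "00002", "group": "A"},
]

baseline, with_proxy = proxy_lift(applicants, "zip_code", "group")
print(f"baseline={baseline:.2f} with_proxy={with_proxy:.2f}")
```

In this toy data, knowing the ZIP code raises the chance of guessing the group correctly from one in two to two in three. A gap like that is a signal worth investigating, not proof of discrimination.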

Colorado went a step further with its Automated Decision-Making Technology Act. Employers deploying “high-risk” AI in hiring or management must conduct annual impact assessments. Employees gain the right to know when an algorithm made a decision about them, and the right to request a human review.

These state laws are pushing American employers toward greater transparency. But they also create friction. The White House’s Executive Order on AI Oversight has encouraged federal pre-emption of state rules, framing a unified standard as necessary for market competitiveness. Labor organizations, including the AFL-CIO, argue that localized safeguards are essential to address algorithmic mismanagement and wage suppression. The executive order itself operates largely as policy guidance rather than binding law, meaning the tension between state innovation and federal harmonization will likely play out in courts and congressional committees over the next several years (AFL-CIO, 2025).

This fragmentation has real-world consequences. An employer operating in California, Illinois, and Colorado must maintain three distinct compliance protocols for the same AI hiring tool. Smaller firms, lacking dedicated legal teams, may simply avoid deploying AI in those states, potentially widening regional disparities in productivity and wage growth.
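The overlap can be sketched as data. The example below encodes only the obligations this article attributes to each state. The dictionary keys and the `obligations_for` helper are hypothetical names chosen for illustration, and the sketch is not a statement of what any statute actually requires.

```python
# Obligations as summarized in this article, not statutory text.
STATE_RULES = {
    "California": {
        "retain_ads_records_years": 4,
        "liable_for_vendor_bias": True,
    },
    "Illinois": {
        "notice_to_workers": True,
        "ban_proxy_discrimination": True,
    },
    "Colorado": {
        "annual_impact_assessment": True,
        "notice_to_workers": True,
        "human_review_on_request": True,
    },
}


def obligations_for(states):
    """Merge the obligations for every state where a tool is deployed."""
    merged = {}
    for state in states:
        for rule, value in STATE_RULES[state].items():
            # Keep the stricter value: True over False, longer retention over shorter.
            merged[rule] = max(merged.get(rule, value), value)
    return merged


checklist = obligations_for(["California", "Illinois", "Colorado"])
for rule in sorted(checklist):
    print(rule, "=", checklist[rule])
```

Merging to the strictest value in each category is one design choice. An employer could instead keep a separate checklist per state, which is closer to the "three distinct protocols" described above.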

The Other Side of the Ledger

No regulatory framework is free of trade-offs, and the counterarguments that shape this debate deserve a hearing.

Critics of strict AI labor rules argue that heavy compliance burdens slow adoption, particularly for small and mid-sized firms. They point out that rigid transition mandates or mandatory audit trails can freeze innovation, leaving companies hesitant to deploy tools that could otherwise improve productivity, safety, or service quality. The market-driven argument holds that flexibility accelerates growth, and that growth historically funds new job categories.

There is also the question of net employment. Displacement narratives often focus on what AI replaces, not what it creates. Demand is already rising for AI compliance officers, workflow designers, model auditors, and human-AI coordination specialists. The real challenge in many sectors is not a shortage of jobs, but a mismatch of skills. Training pipelines, not just protection laws, will determine whether workers can transition into augmented roles (World Economic Forum, 2025).

Then there is the enforcement gap. Even the strongest frameworks face real-world friction. Managers use unapproved AI tools without logging them. Vendors keep their model architectures proprietary. Labor inspectorates are understaffed and underfunded. On the ground, statutory rights can easily outpace practical oversight.

Finally, divergent standards invite regulatory arbitrage. Multinational firms may route AI development or deployment to jurisdictions with lighter oversight, potentially undermining local labor safeguards while raising geopolitical friction over data flows and algorithmic governance. Policymakers must design rules that protect workers without isolating their economies from global tech ecosystems.

These tensions do not invalidate the regulatory shifts. They clarify the stakes. The goal is not to stop AI from entering the workplace. It is to manage that entry in a way that balances innovation, fairness, and accountability.

Beyond the Big Three: Emerging Approaches

While China, the EU, and the U.S. dominate the regulatory conversation, other jurisdictions are testing alternative models that deserve attention.

Singapore, for example, has adopted a “light-touch” framework centered on voluntary guidelines and industry co-regulation. The Tripartite Guidelines on Fair Employment Practices encourage employers to conduct AI impact assessments and provide worker consultation, but stop short of mandating specific outcomes. This approach reflects Singapore’s broader strategy of positioning itself as a trusted hub for responsible AI innovation (Infocomm Media Development Authority, 2024).

India, meanwhile, is grappling with AI displacement in its massive informal labor sector. Early policy discussions emphasize digital literacy and portable benefits systems that could follow workers across gigs and employers—a model that could prove influential across the Global South (NITI Aayog, 2025).

These examples remind us that regulatory design is not one-size-fits-all. Context matters: labor market structure, institutional capacity, and political priorities all shape what is feasible and effective. The global conversation on AI and work will be richer if it includes perspectives beyond the traditional power centers.

What to Watch: 2026–2027

The regulatory landscape is moving quickly. Here are the developments that will shape the next eighteen months:

- China: The Supreme People’s Court is expected to issue formal guiding cases on AI-driven restructuring, which will standardize compensation benchmarks and reassignment criteria nationwide. Provincial pilots for “AI transition subsidies” or mandatory retraining levies on large employers are likely to follow.

- European Union: As the AI Act’s employment provisions enter active enforcement, national data protection and labor inspectorates will publish first-wave audit guidelines. Expect cross-border litigation challenging algorithmic performance scoring and automated termination workflows.

- United States: Federal pre-emption pressures will intensify as Congress debates broader algorithmic accountability legislation. The EEOC and NLRB are preparing joint guidance on AI-induced constructive discharge and proxy discrimination. State-level “right to human review” laws will likely expand to fifteen or more jurisdictions by late 2026.

- Corporate & Labor Dynamics: Collective bargaining agreements in tech, logistics, and professional services are increasingly embedding “AI impact clauses” that require advance notice, transition pay, and upskilling funds. At the same time, AI vendor contracts are shifting compliance liabilities toward the companies that deploy the tools, forcing employers to demand transparency before signing.

Methodology & Source Notes

This analysis draws on publicly available judicial publications, administrative rulings, statutory texts, and policy guidance published through May 2026. Primary legal sources include the Hangzhou Intermediate People’s Court Typical Cases on the Protection of Rights in AI Enterprises and Workers (April 2026), the Beijing Municipal Bureau of Human Resources and Social Security arbitration ruling on AI displacement (December 2025), Regulation (EU) 2024/1689 of the European Parliament and of the Council on Artificial Intelligence (AI Act), California Fair Employment and Housing Act (ADS retention provisions), Illinois Human Rights Act (algorithmic transparency amendments), Colorado Automated Decision-Making Technology Act, and Executive Order 14110 on Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence (U.S. White House, 2023). Regulatory interpretations reflect published agency guidance, consensus legal commentary from recognized labor law journals, and analysis from policy trackers, including the International Association of Privacy Professionals and the Brookings Institution. The landscape is evolving rapidly; practitioners should monitor legislative dockets, appellate rulings, and enforcement notices for real-time updates.

Conclusion

The integration of AI into daily work has crossed a threshold. It is no longer a matter of operational efficiency. It is a matter of statutory liability.

The Hangzhou precedent, EU transparency mandates, and U.S. state-level rules all point in the same direction, though with different force: algorithmic displacement is increasingly treated as a managed transition, not an unregulated market event. Societies are deciding, in real time, whether the human costs of technological iteration will be absorbed by employers, or passed on to workers.

For policymakers, the task is to design frameworks that protect workers without freezing innovation. Standardize notice and audit requirements. Fund reskilling infrastructure aligned with AI-augmented roles. Avoid rules that punish adoption; design rules that govern it responsibly.

For employers, the message is clear: treat AI deployment as a structural workforce event. Build compliance tracks that meet both EU transparency standards and Chinese transition expectations. Embed human review, bias testing, and advance notice into every workflow. You cannot outsource liability to a vendor.

For workers and labor organizations, the advice is to document everything. Track AI tool usage, performance metrics, and reassignment offers. Push for “AI impact clauses” in contracts and collective bargaining agreements. The rights you are gaining now (transparency, human review, non-discrimination) are being codified because people are asking for them.

The future of work will not be determined by what AI can do. It will be determined by how we choose to govern it. The rules are being written now. The question is whether we will let the market decide alone, or step in to ensure the transition works for everyone.

And beneath that question lies a geopolitical one: Will the world converge on a shared framework for algorithmic governance, or fragment into competing regulatory blocs? The answer will shape not only labor markets, but the broader architecture of technological power in the 21st century.

References

AFL-CIO. (2025). Algorithmic accountability in the workplace: A labor perspective. https://aflcio.org/publications

European Commission. (2024). Regulation (EU) 2024/1689 of the European Parliament and of the Council on artificial intelligence (AI Act). Official Journal of the European Union.

Hangzhou Intermediate People’s Court. (2026, April). Typical cases on the protection of rights in AI enterprises and workers. http://hzzy.chinacourt.gov.cn

Infocomm Media Development Authority. (2024). Tripartite guidelines on fair employment practices: AI supplement. Singapore Government.

NITI Aayog. (2025). Approach paper on AI and the future of work in India. Government of India.

State Council of the People’s Republic of China. (2024). Guiding opinions on promoting common prosperity through high-quality development. http://www.gov.cn

U.S. White House. (2023). Executive Order 14110: Safe, secure, and trustworthy development and use of artificial intelligence. https://www.whitehouse.gov

World Economic Forum. (2025). The future of jobs report 2025. https://www.weforum.org/reports

Zhang, L. (2025). Labor law and technological change in China: The Hangzhou precedent. China Law Review, 18(2), 45–67.