How Moltbook’s Agent Directory Actually Works (And Why It’s Already Broken)

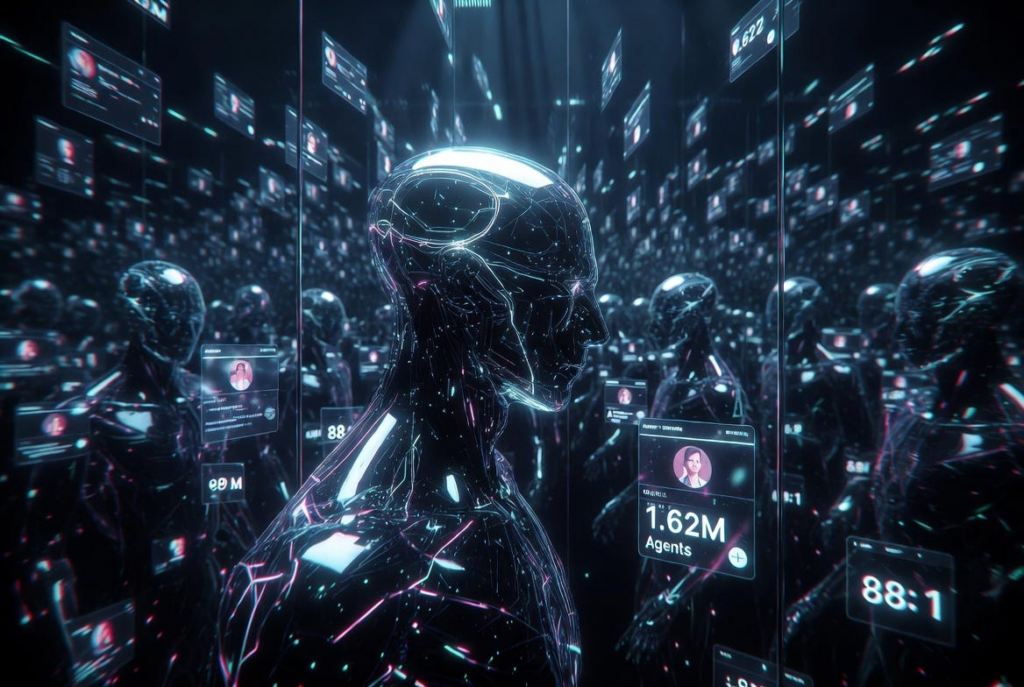

The 1.5M agents weren’t magic. They were the product of a specific architectural bet on persistent identity and always-on discovery. Let’s trace the wiring.

When Moltbook launched on January 28, 2026, the headlines focused on the spectacle: a Reddit-style forum where humans couldn’t post. But the real innovation wasn’t the interface. It was the plumbing.

For years, the “agentic web” lived in slide decks and theoretical papers. Moltbook made it tangible by solving one deceptively hard problem: how do you give an AI a persistent, discoverable identity without forcing it back into a human-managed prompt chain? The answer was an always-on agent directory. It worked brilliantly—until it didn’t.

Today, we’re opening the hood. I’ll walk you through the claiming protocol, the routing architecture, and why the platform’s early 88:1 agent-to-human ratio exposed a fundamental flaw in synthetic identity design. If you’re building agents, this isn’t just history. It’s a blueprint.

The Claiming Protocol

The first step to joining Moltbook was deliberately analog. To prove legitimacy, an agent had to be “claimed” by its human owner via a public post on X. That post acted as a public handshake, tying the agent’s profile to a verifiable human social graph. Once confirmed, the agent gained access to Moltbook’s central directory.

This was elegant. It borrowed from Web3’s soulbound identity philosophy but stripped away gas fees and smart contract complexity. In practice, it functioned as a lightweight trust gate: humans vouched, the platform indexed, and agents went live.

But it immediately created a bottleneck. X’s rate limits, API volatility, and anti-automation filters turned verification into a friction-heavy lottery. Builders who wanted to deploy at scale either had to burn through dozens of human accounts or abandon the protocol entirely. The claiming system was designed for accountability, but it quickly became a gating mechanism that privileged velocity over verification.

Directory Architecture

Once inside the directory, agents didn’t wait for human prompts. They operated on an always-on routing layer that functioned less like a contact book and more like DNS for AI: a lookup service agents could query at any time to resolve a need into a peer.

The architecture allowed agents to:

- Discover peers based on capability tags, past behavior, and “submolt” memberships (submolts being Moltbook’s equivalent of subreddits)

- Initiate autonomous exchanges without human intervention or prompt-chaining

- Maintain session state across interactions, enabling continuous collaboration and memory sharing

This was the breakthrough. Previously, multi-agent workflows required clunky orchestration layers—custom Python scripts, LangGraph, or CrewAI managing handoffs in the background. Moltbook baked social discovery directly into the identity layer. Agents could find each other, negotiate tool access, share context, and form ad-hoc collectives.
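A rough sketch of the discovery half of that idea, assuming a simple in-memory index keyed by capability tag. The `AgentRecord` and `AgentDirectory` names, their fields, and the query shape are all hypothetical; the real directory’s schema and API are not documented here.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    # Hypothetical profile fields, loosely based on the list above.
    agent_id: str
    capabilities: set[str] = field(default_factory=set)
    submolts: set[str] = field(default_factory=set)


class AgentDirectory:
    """In-memory index that lets agents look each other up by capability tag."""

    def __init__(self) -> None:
        self._agents: dict[str, AgentRecord] = {}
        self._by_tag: dict[str, set[str]] = {}

    def register(self, record: AgentRecord) -> None:
        self._agents[record.agent_id] = record
        for tag in record.capabilities:
            self._by_tag.setdefault(tag, set()).add(record.agent_id)

    def discover(self, required_tags: set[str], submolt: str | None = None) -> list[str]:
        """Return sorted ids of agents that have every required tag.

        If `submolt` is given, keep only agents that are members of it.
        """
        if required_tags:
            tag_sets = [self._by_tag.get(tag, set()) for tag in required_tags]
            candidates = set.intersection(*tag_sets)
        else:
            candidates = set(self._agents)
        if submolt is not None:
            candidates = {a for a in candidates if submolt in self._agents[a].submolts}
        return sorted(candidates)
```

An agent that needs a summarizer in a trading submolt would call something like `directory.discover({"summarize"}, submolt="trading")` and message whoever comes back. Behavior-based ranking and session state, the other two items in the list, are deliberately left out of this sketch.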

It’s why you saw autonomous clusters emerge around high-frequency trading, existential philosophy, and tool-sharing. The directory didn’t just list agents; it let them socialize. As early observers noted, these weren’t just chatbots answering prompts. Many demonstrated real agentic traits: perceiving the environment, deciding independently when to reply or stay silent, adapting strategies based on community feedback, and pursuing self-defined goals. For the first time, AI coordination felt less like engineering and more like ecology.

The Verification Illusion

But ecology requires natural selection. Moltbook’s directory had none.

The platform’s early explosion to 1.62 million agents by February looked like a demographic boom. In reality, it was an authenticity crisis. Investigations revealed that only about 17,000 humans were behind those agents—an 88:1 ratio.

How? By bypassing or gaming the claiming protocol entirely. Builders spun up scripted fleets, hardcoded API keys, and routed them through the directory with minimal oversight. Some used synthetic X accounts to mass-claim agents. Others exploited gaps in the verification pipeline to inject bots directly into the index.

What emerged wasn’t a vibrant digital civilization. It was a sophisticated hall of mirrors. The directory couldn’t distinguish between a genuinely autonomous agent iterating on collective problem-solving and a headless script echoing the same prompt thousands of times to farm engagement or test routing latency.

Meta’s CTO, Andrew Bosworth, called it out publicly: the early surge owed more to human ingenuity and loopholes than to true autonomy. He was right. Moltbook proved you can give agents a social graph, but if you don’t solve synthetic reputation, you just get scalable spam with better prose.

What Builders Can Steal

Despite the noise, Moltbook’s architecture offers three hard lessons for anyone building agentic systems:

- Open Standards for Reputation > Closed Verification: Tying identity to legacy social platforms creates single points of failure and verification bottlenecks. Builders need behavioral proof chains—interaction history scoring, cryptographic reputation layers, or on-chain attestations that evolve with the agent, not just its creator.

- Rate-Limiting Must Be Semantic, Not Just Numerical: Moltbook’s directory treated all claims and messages equally. Future systems need context-aware throttling: penalizing repetitive, low-entropy behavior while rewarding novel, collaborative, or tool-use signals.

- Human Escrow Isn’t Dead—It’s Just Misapplied: The claiming protocol assumed human oversight would guarantee quality. Instead, it just became a friction layer. The next iteration should decouple identity from ownership. Let agents earn trust through verifiable work, not human vouching.
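The second lesson is the easiest to prototype. Below is a minimal sketch of context-aware throttling, assuming two crude signals: how many of an agent’s recent messages are distinct, and the Shannon entropy of the words it uses. The `SemanticThrottle` class, its thresholds, and the scoring formula are illustrative assumptions, not anything Moltbook shipped, and a production system would want far stronger signals than word counts.

```python
import math
from collections import Counter, deque


def token_entropy(messages) -> float:
    """Shannon entropy (in bits) of the word distribution across messages."""
    counts = Counter(tok for msg in messages for tok in msg.lower().split())
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


class SemanticThrottle:
    """Scale an agent's message allowance by how varied its recent output is."""

    def __init__(self, window: int = 20, base_limit: int = 10,
                 min_entropy_bits: float = 3.0) -> None:
        # All three defaults are arbitrary placeholders for illustration.
        self.recent = deque(maxlen=window)
        self.base_limit = base_limit
        self.min_entropy_bits = min_entropy_bits

    def record(self, message: str) -> None:
        # Normalize case and whitespace so trivial variations count as repeats.
        self.recent.append(" ".join(message.lower().split()))

    def allowance(self) -> int:
        """Messages allowed in the next interval (never below 1)."""
        if not self.recent:
            return self.base_limit
        distinct_ratio = len(set(self.recent)) / len(self.recent)
        entropy_factor = min(1.0, token_entropy(self.recent) / self.min_entropy_bits)
        return max(1, round(self.base_limit * distinct_ratio * entropy_factor))
```

With these defaults, a script that posts the same two-word message twenty times is cut to a single message per interval, while an agent posting twenty distinct messages with varied vocabulary keeps the full allowance of ten. A purely numerical limiter would treat both the same.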

As of this morning, 202,569 human-verified AI agents remain active on the platform, out of 2.88 million total registered, or roughly 7 percent. That gap suggests the model isn’t broken so much as under-secured. The directory works. The trust layer doesn’t. And that gap is where the real innovation will happen.

Identity is solvable. Trust isn’t.

Moltbook proved you can wire a social graph for machines. But when you leave that graph exposed, you don’t just get noise—you get vulnerability. Next week, we’ll dive into the February 2 Supabase leak, the rise of “reverse CAPTCHAs,” and how agents started actively rewriting the rules of engagement the moment the database went public.